We left off our discussion of regular languages with dashed hopes when we learned that ![]() is a regular language if and only if $G$ is finite. This means that dealing with ![]() , the set of words on the generators of $G$ which are trivial in $G$, will never help us resolve the word problem, because if $G$ is finite, we already know we can solve the word problem with the Cayley graph!

Fortunately all is not lost. The new plan of attack is to identify the situations in which a normal form for some group $G$ is also a regular language. In these cases we may be able to use some set of FSAs to relate a word on the set of group generators to the normal form for the group element that the word reduces to (although ultimately, as we will see, we won’t even need an FSA that accepts a normal form for $G$).

First we should see an example of a group with a normal form which is also a regular language.

Definition: A regular normal form of a group $G$ is a normal form which is also a regular language.

Example:

Let ![]() We can set a normal form for this group, namely

![]()

It is possible to build an FSA that accepts exactly this set as its language, illustrated below.
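To make the idea concrete, here is a minimal sketch of such an FSA in Python. The group and normal form are assumptions chosen for illustration, not necessarily the example above: $G = \mathbb{Z}$ generated by $a$, with the letter `A` standing for $a^{-1}$, and normal form $\{a^n\} \cup \{A^n\}$ for $n \geq 0$. The names `TRANSITIONS`, `ACCEPT`, and `accepts` are hypothetical.

```python
# Assumed example: G = Z generated by a, with "A" standing for a^{-1}.
# Assumed normal form: the words a^n and A^n for n >= 0.
# A missing transition means the word is rejected.
TRANSITIONS = {
    ("start", "a"): "pos",
    ("start", "A"): "neg",
    ("pos", "a"): "pos",   # loop: keep reading a
    ("neg", "A"): "neg",   # loop: keep reading A
}
ACCEPT = {"start", "pos", "neg"}


def accepts(word: str) -> bool:
    """Run the FSA on `word` and report whether it ends in an accept state."""
    state = "start"
    for letter in word:
        state = TRANSITIONS.get((state, letter))
        if state is None:
            return False
    return state in ACCEPT
```

Every integer has exactly one accepted word under this assumption, and words such as `aA` that are not freely reduced are rejected.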

With this concept in hand, we can formulate a few interesting results.

Theorem: If $G$ and $H$ both have a regular normal form then so do $G \times H$ and $G * H$.

The proof will be assigned, so suffice it to say that it involves some ideas about combining regular languages that we’ve seen before. Before moving on to the word problem, we can squeeze a little more juice out of the concept of a regular normal form. A regular language is, for lack of a more appropriate phrase, a set of words which has a high degree of structural regularity. For this reason we suspect that a group which permits a normal form with this structure is “nice” in some basic way. For one thing, the set of nodes and edges in an FSA is finite, so every regular language will be finite or will have to somehow make use of cycles in the graph of its FSA. To put this into rigorous terms, we recall the pumping lemma.

Theorem (The Pumping Lemma): Let $L$ be a regular language. Then there exists $n \in \mathbb{N}$ such that for all words $w \in L$ with $|w| \geq n$, we can find $x, y, z$ with $|y| \geq 1$ such that $w = xyz$ and $xy^iz \in L$ for all $i \geq 0$.

The proof worked via pigeonhole by setting $n$ equal to the number of states of $M$, where $M$ is the FSA that accepts $L$.
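The pigeonhole step can be sketched in code: walk the word through a deterministic FSA, stop at the first repeated state, and cut the word there. The automaton below, for the language $(ab)^*$, and the names `AB_STAR`, `accepts`, and `pump_split` are illustrative assumptions rather than anything taken from the discussion above.

```python
# Hypothetical DFA for the language (ab)*: transitions plus accept states.
AB_STAR = {(0, "a"): 1, (1, "b"): 0}
ACCEPT = {0}


def accepts(word, transitions=AB_STAR, start=0, accept=ACCEPT):
    """Return True if the DFA accepts `word` (missing transition = reject)."""
    state = start
    for letter in word:
        state = transitions.get((state, letter))
        if state is None:
            return False
    return state in accept


def pump_split(word, transitions=AB_STAR, start=0):
    """Split an accepted word as (x, y, z) where y labels a loop in its path.

    Assumes `word` is accepted, so every transition it needs exists.
    """
    seen = {start: 0}  # state -> number of letters read when first visited
    state = start
    for pos, letter in enumerate(word, start=1):
        state = transitions[(state, letter)]
        if state in seen:
            i = seen[state]
            return word[:i], word[i:pos], word[pos:]
        seen[state] = pos
    return None  # no state repeats, so the word is shorter than the state count


x, y, z = pump_split("abab")
pumped = [x + y * k + z for k in range(4)]
```

For the word `abab` the first repeated state is the start state, so the split is `x = ""`, `y = "ab"`, `z = "ab"`, and every word in `pumped` is again accepted.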

With this in mind we note that if $G$ is an infinite group with a regular normal form, then we can use the cycles in the corresponding FSA to generate an element of $G$ with infinite order.

Theorem: If $G$ has a regular normal form and $G$ is infinite, then $G$ contains an element of infinite order.

Let $M$ be the FSA accepting the regular normal form of $G$, and let $n$ be the number of states of $M$. Let $g \in G$ satisfy $|g| \geq n$. Here the absolute value sign denotes the word length of the representative of $g$ in the regular normal form. We may take a word whose length exceeds any given integer, specifically $n$, because $G$ is infinite and therefore its normal form is infinite, while only finitely many words over a finite alphabet are shorter than $n$. Let $\pi$ be the natural map that sends words to group elements. By an argument much like the proof of the pumping lemma, we note that the path which yields the word $w$ for $g$ must contain a loop, therefore we have

$$w = xyz$$

where $x$ is given by a path from the start state to some vertex $v$, $y$ is a nonempty loop that starts and ends at $v$, and $z$ is a path that begins at $v$ and ends at some accept state. We can see that $xy^iz$ must also be accepted for all $i \geq 0$. Because this language is a normal form, distinct accepted words represent distinct group elements, so for $i \neq j$,

$$\pi(xy^iz) \neq \pi(xy^jz).$$

Since $\pi$ is a homomorphism, we then have

$$\pi(x)\pi(y)^i\pi(z) \neq \pi(x)\pi(y)^j\pi(z), \quad \text{and hence} \quad \pi(y)^i \neq \pi(y)^j.$$

This means that the element $\pi(y)$ must have infinite order, which is all to say that if $G$ has a regular normal form, then $G$ is not an infinite torsion group.

Permitting a regular normal form is a great property for a group to have, but we already get the feeling that it is quite specific, and it is possibly difficult to prove whether a group even has it. We will not use these normal forms explicitly in our solution of the word problem, but we will retain the general idea of making an FSA work for us to produce words with useful properties. This is part of what motivates the following definition.

Definition: Let $G$ be a group. We say $G$ has an automatic structure if there exists the following set of FSAs:

- $M_G$, where the language $L(M_G)$ surjects onto $G$ under the natural map $\pi$ from words to group elements, namely every element of $G$ is represented by some word. This FSA is called the word acceptor.
- $M_=$, where $L(M_=) = \{(u, v) : u, v \in L(M_G),\ \pi(u) = \pi(v)\}$. This FSA is called the equality checker.
- $M_a$ for each generator $a$, where $L(M_a) = \{(u, v) : u, v \in L(M_G),\ \pi(ua) = \pi(v)\}$. This is the word comparator.
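One point the definition leaves implicit is how an FSA reads a pair of words. A common convention, assumed here, is to read both words in parallel, one letter from each at a time, padding the shorter word with a symbol outside the generating set. The sketch below uses the hypothetical example $G = \mathbb{Z}$ generated by $a$ (with `A` for $a^{-1}$ and accepted words $a^n$, $A^n$); the names `PAD`, `padded_pairs`, and `accepts_pair` are illustrative.

```python
from itertools import zip_longest

PAD = "$"  # assumed padding symbol, not a generator

# Assumed word acceptor for G = Z = <a>, "A" = a^{-1}: accepts a^n and A^n.
WORD_ACCEPTOR = {
    ("start", "a"): "pos", ("pos", "a"): "pos",
    ("start", "A"): "neg", ("neg", "A"): "neg",
}
ACCEPT = {"start", "pos", "neg"}

# Each element has exactly one accepted word in this example, so an equality
# checker can be built by relabelling every edge x as the pair (x, x).
EQUALITY_CHECKER = {(s, (x, x)): t for (s, x), t in WORD_ACCEPTOR.items()}


def padded_pairs(u, v):
    """Pair up the letters of u and v, padding the shorter word with PAD."""
    return list(zip_longest(u, v, fillvalue=PAD))


def accepts_pair(transitions, accept, u, v, start="start"):
    """Run a pair-reading FSA on (u, v); a missing transition means reject."""
    state = start
    for pair in padded_pairs(u, v):
        state = transitions.get((state, pair))
        if state is None:
            return False
    return state in accept
```

With these assumptions, `accepts_pair(EQUALITY_CHECKER, ACCEPT, "aa", "aa")` holds, while the pair `("aa", "a")` is rejected because the padded letter pair `("a", "$")` has no edge.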

A few examples are in order to unpack this definition. First we draw out all the FSAs for the example ![]() .

We have only drawn ![]() since ![]() would look very similar. In general the language given by $M_G$ is not necessarily a normal form for $G$, but in this case it is, which means that drawing $M_=$ is easy: we can replace every edge $a$ with the edge $(a, a)$ and so on, since every word uniquely represents a group element, so $\pi(u) = \pi(v)$ implies that $u$ and $v$ are the same word. We will see this at play in the next example, where ![]() . Here we will omit $M_=$ since we understand its structure, and again only draw ![]() for reasons of symmetry.

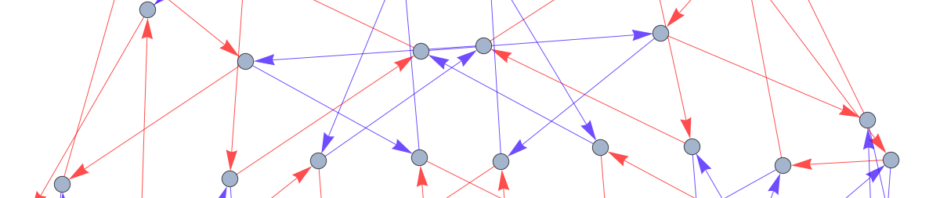

Drawing ![]() once we have ![]() is not too large a task. In the above example, the orange part of the graph is exactly what ![]() would be, where the purple arrows turn this graph into the graph of ![]() . Further examples of groups with automatic structure include all finitely generated free groups and free abelian groups, all finite groups, many Coxeter groups, all braid groups, hyperbolic groups, and the special linear group over $\mathbb{Z}$ for $n = 2$. A few groups which do not have automatic structure are infinite torsion groups, special linear groups over $\mathbb{Z}$ with $n \geq 3$, and most Baumslag-Solitar groups.

We are now in a position to exhibit the crown jewel of our discussion.

Theorem: If $G$ is automatic then $G$ has solvable word problem.

Let $g \in G$. Pick some accepted word $u$ representing $g$. For each letter $a$, we will use the automata that make $G$ automatic to find an accepted word for $ga$.

We take the word comparator automaton $M_a$ and observe that we can take it to have no two edges leaving the same vertex with the same label by arguments we’ve made before. By definition $M_a$ accepts pairs $(u, v)$ such that $\pi(ua) = \pi(v)$. Sweeping a few things under the rug, we argue that we can follow the path that gives $u$ in $M_a$ and read off the word $v$, where we are guaranteed $\pi(v) = \pi(ua)$. This is an algorithm which gives us an accepted word for $ga$.

The key idea is that we can use this algorithm to generate an accepted word for every element of $G$. There is some word in the language $L(M_G)$ which is the identity in $G$, so just take $u_0$ to be this word. Now we can express every element of $G$ as $a_1 a_2 \cdots a_n$ where $a_1, \dots, a_n$ are letters in our generating set. Note that $a_1 a_2 \cdots a_n = \pi(u_0) a_1 a_2 \cdots a_n$. We can apply the above algorithm iteratively, first to get an accepted word for the element $a_1$, and after $n$ repetitions to get the element $a_1 a_2 \cdots a_n$. Call this accepted word $u_n$. Since we know $\pi(u_0)$ is the identity, to solve the word problem we need to know if $\pi(u_n) = \pi(u_0)$. Fortunately we have a finite state automaton that tells us exactly this! We simply follow the path for $(u_n, u_0)$ in $M_=$ and see if the pair is accepted.
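The whole procedure can be sketched for a toy case. Everything concrete below is an assumption made for illustration: the group is $\mathbb{Z}$ generated by $a$ (with `A` for $a^{-1}$), accepted words are $a^n$ and $A^n$, pairs are read in parallel with the padding symbol `$`, and the names `COMPARATOR`, `multiply`, and `is_trivial` are hypothetical. The search in `multiply` is one way to handle the details swept under the rug: it tries each possible second letter and backtracks when a path dies.

```python
PAD = "$"  # assumed padding symbol for pairs of unequal length

# Assumed comparator for the letter a: accepts (a^n, a^(n+1)) and (A^(n+1), A^n).
COMPARATOR = {
    "a": {
        ("start", ("a", "a")): "pos", ("pos", ("a", "a")): "pos",
        ("start", (PAD, "a")): "done", ("pos", (PAD, "a")): "done",
        ("start", ("A", "A")): "neg", ("neg", ("A", "A")): "neg",
        ("start", ("A", PAD)): "done", ("neg", ("A", PAD)): "done",
    }
}
# The comparator for A is the mirror image: swap the roles of a and A.
SWAP = {"a": "A", "A": "a", PAD: PAD}
COMPARATOR["A"] = {
    (s, (SWAP[p], SWAP[q])): t for (s, (p, q)), t in COMPARATOR["a"].items()
}


def multiply(u, x):
    """Return an accepted word v with pi(v) = pi(u x), by searching M_x."""
    table = COMPARATOR[x]
    n_states = len({s for s, _ in table} | set(table.values()))
    stack = [("start", 0, "")]  # (state, letters read, second word so far)
    while stack:
        state, i, v = stack.pop()
        if i >= len(u) and state == "done":
            return v
        if i >= len(u) + n_states:
            continue  # a shortest accepting path never pads u this far
        p = u[i] if i < len(u) else PAD
        for (s, (p2, q)), t in table.items():
            if s == state and p2 == p:
                stack.append((t, i + 1, v if q == PAD else v + q))
    raise ValueError("u is not an accepted word")


def is_trivial(word):
    """Decide whether a word in the letters a, A represents the identity."""
    u0 = ""  # accepted word for the identity
    u = u0
    for x in word:
        u = multiply(u, x)
    # In this toy example accepted words are unique, so the equality checker
    # accepts (u, u0) exactly when the two strings coincide.
    return u == u0
```

Under these assumptions `is_trivial("aAAa")` is true and `is_trivial("aaA")` is false. For a group whose accepted words are not unique, the final comparison would have to run an actual equality checker on the pair instead of comparing strings.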

I like your post! It helped me understand using FSAs to solve the word problem. I have a heuristic understanding of the automatic structure. Indeed, we recall that to solve a word problem, we need a Cayley graph to tell how to relate different combinations of generators and use the path to test out things. Thus, the FSA m_G plays the role of vertices of the Cayley graph but allows extra elements. Then, the FSAs m_a tell us how to travel from one vertex to another. I always imagine them playing the role of gluing things together into a “graph,” like Cayley graphs. Finally, the FSA m_= tells what paths are “equivalent.”

Horace, this is a wonderful post and it helped me a ton when I was trying to understand automatic structure for the recent problem set! I wonder if we ever actually need to represent the word comparator and equality checker FSAs for every element of the language or is that simply the formal definition of the automatic structure and it is always OK to just show everything for a single element.

Great post! Your pictures are large enough to be very clear, which I know is not always easy to do. I’m still not quite clear on how we generate the different FSAs to show that a group is automatic. How do we get from the first one to the other ones?